Follow a structured Research, Plan, Implement, Review, Ship workflow that consistently produces production-grade code.

Boris Cherny, the creator of Claude Code, shared publicly in January 2026 that he shipped 259 pull requests in 30 days: over 40,000 lines of code, 100% written by Claude Code paired with Opus 4.5.

That number sounds like raw speed. It is not. Cherny runs a deliberate five-phase workflow: Research, Plan, Implement, Review, Ship.

The speed is a side effect of the structure.

Anthropic employees self-reported that 12 months ago they used Claude in 28% of their daily work and got a 20% productivity boost from it. Now they use Claude in 59% of their work and report an average productivity gain of 50%. This roughly corroborates the 67% increase in merged pull requests.

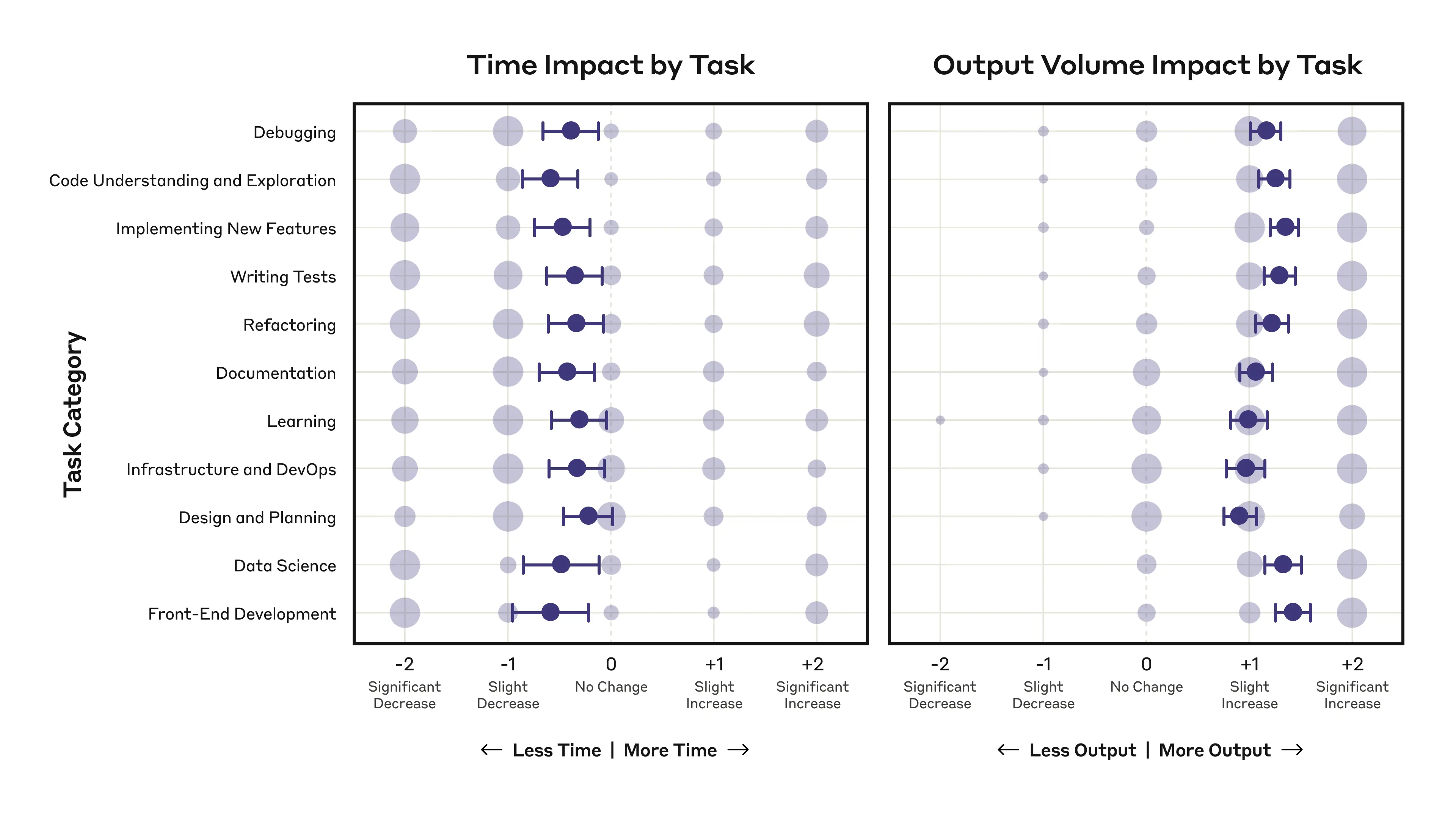

When employees developed strategic AI delegation skills, including the structure described above, time spent decreased across almost all task categories and output volume increased by a larger margin.

Without the structure, speed creates debt. GitClear's 2025 analysis of 211 million changed lines of code found that copy-pasted code blocks rose from 8.3% to 12.3% since AI adoption, while refactoring activity collapsed from 25% to under 10%.

Developers are typically duplicating more and cleaning up less.

The code that AI agents produce compiles, passes a quick review, and ships. Then six months later you are debugging a function that exists in three places with slightly different behavior.

A structured workflow does not slow you down. It prevents the rework that slows you down.

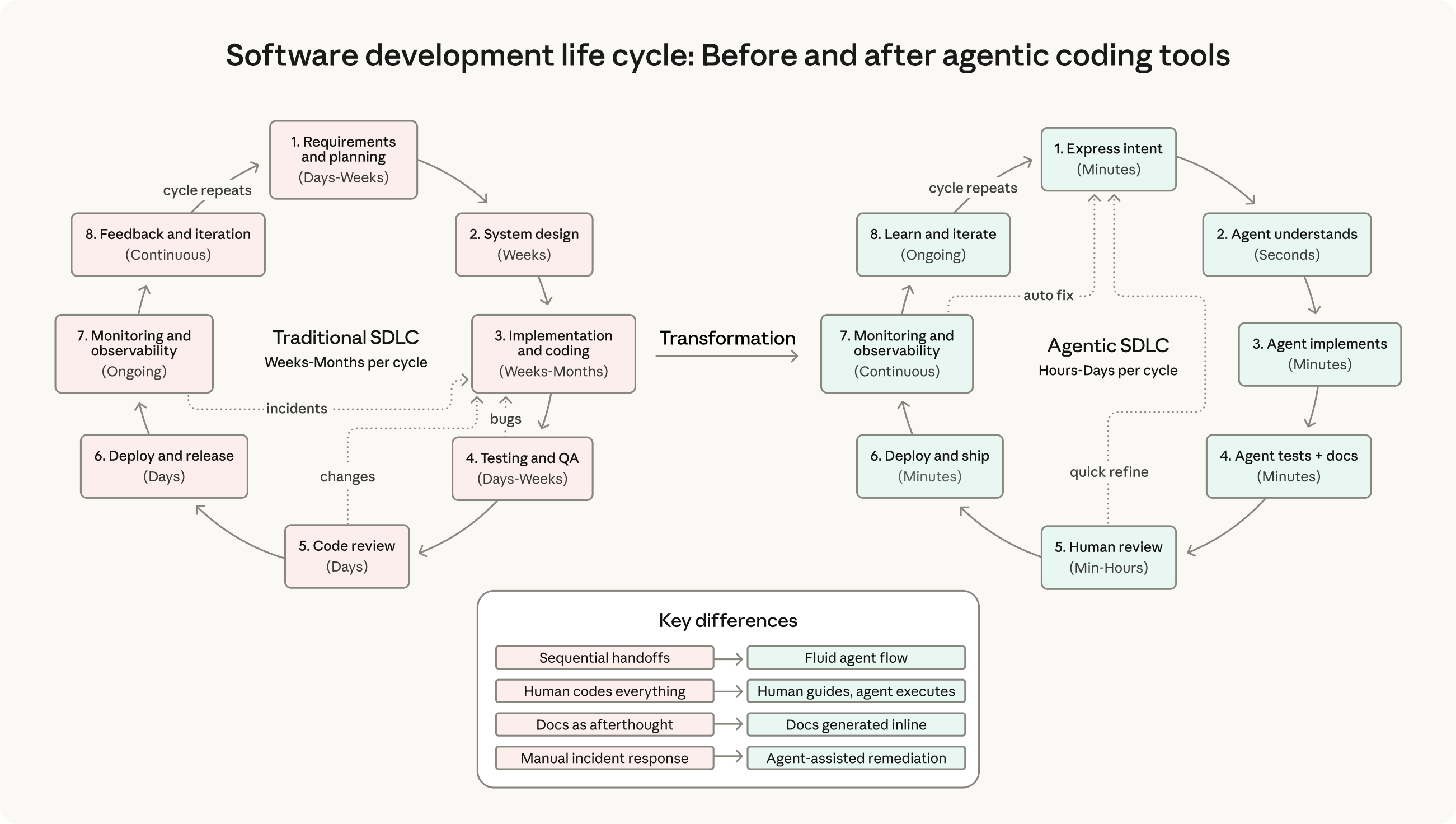

Anthropic's 2026 Agentic Coding Trends Report backs this up with data. The traditional SDLC that took weeks to months per cycle is collapsing to hours and days. The report names the shift: the engineer's role is transforming from implementer to orchestrator.

Your value in agentic AI engineering is architecture design, agent coordination, quality evaluation, and breaking hard problems into smaller ones.

The diagram captures the key differences:

This is the "human guides, agent executes" model, and it only works when each phase has clear entry and exit criteria.

The five-phase workflow exists because skipping phases creates compounding errors. A wrong assumption in the research phase becomes a flawed plan, then a broken implementation, then a rejected PR. Each phase is a checkpoint that catches mistakes before they spread.
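The checkpoint idea can be sketched in a few lines of Python. This is a minimal illustration, not Claude Code's API: `Phase`, `GateError`, `run_workflow`, and `make_phases` are hypothetical names, and the `run` functions are stubs standing in for real agent work. Each phase declares an entry check and an exit check, and the workflow stops at the first gate that fails instead of passing a bad artifact downstream.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Artifacts = Dict[str, object]  # what one phase hands to the next


class GateError(RuntimeError):
    """Raised when a phase's entry or exit criteria are not met."""


@dataclass
class Phase:
    name: str
    entry_ok: Callable[[Artifacts], bool]  # may this phase start?
    run: Callable[[Artifacts], Artifacts]  # stub for the real agent work
    exit_ok: Callable[[Artifacts], bool]   # may the next phase start?


def run_workflow(phases: List[Phase], artifacts: Artifacts) -> Artifacts:
    """Run phases in order, stopping at the first failed gate."""
    for phase in phases:
        if not phase.entry_ok(artifacts):
            raise GateError(f"{phase.name}: entry criteria not met")
        artifacts = phase.run(dict(artifacts))
        if not phase.exit_ok(artifacts):
            raise GateError(f"{phase.name}: exit criteria not met")
    return artifacts


def make_phases(research_findings: List[str]) -> List[Phase]:
    """Hypothetical five phases with stubbed work and simple gates."""
    return [
        Phase("research",
              entry_ok=lambda a: bool(a.get("task")),
              run=lambda a: {**a, "findings": research_findings},
              exit_ok=lambda a: len(a["findings"]) > 0),
        Phase("plan",
              entry_ok=lambda a: "findings" in a,
              run=lambda a: {**a, "plan": [f"address: {f}" for f in a["findings"]]},
              exit_ok=lambda a: len(a["plan"]) > 0),
        Phase("implement",
              entry_ok=lambda a: "plan" in a,
              run=lambda a: {**a, "diff": f"{len(a['plan'])} step(s) applied"},
              exit_ok=lambda a: bool(a["diff"])),
        Phase("review",
              entry_ok=lambda a: "diff" in a,
              run=lambda a: {**a, "approved": True},
              exit_ok=lambda a: a["approved"] is True),
        Phase("ship",
              entry_ok=lambda a: a.get("approved") is True,
              run=lambda a: {**a, "shipped": True},
              exit_ok=lambda a: a["shipped"] is True),
    ]
```

With findings in hand, `run_workflow(make_phases(["duplicate helper in utils"]), {"task": "fix bug"})` runs through to the ship phase. With an empty findings list, it raises `GateError` at the research exit gate, so no plan is ever built on missing research.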

Keep the phases separate from one another to avoid solving the wrong problem. Where applicable, use subagents with fresh context so the model brings a fresh, critical perspective.
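One way to picture "fresh context" is as a handoff that carries only a summary forward, never the previous phase's full transcript. The sketch below is an assumption-laden illustration: `Handoff`, `SubagentContext`, and `fresh_context` are hypothetical names, not part of Claude Code, and a real subagent would receive its context through the tool's own mechanism.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Handoff:
    """What one phase passes to the next (hypothetical structure)."""
    phase: str
    summary: str
    open_questions: List[str] = field(default_factory=list)


@dataclass
class SubagentContext:
    """The messages a subagent starts with (hypothetical structure)."""
    role: str
    messages: List[str] = field(default_factory=list)


def fresh_context(role: str, handoff: Handoff) -> SubagentContext:
    """Build a new context from the handoff only, not the prior transcript."""
    ctx = SubagentContext(role=role)
    ctx.messages.append(f"Handoff from {handoff.phase}: {handoff.summary}")
    for question in handoff.open_questions:
        ctx.messages.append(f"Open question: {question}")
    return ctx


# The implementer may have accumulated a long transcript...
transcript = [f"implementer message {i}" for i in range(50)]

# ...but the reviewer starts from the handoff alone.
handoff = Handoff(
    phase="implement",
    summary="Added retry logic to the upload client",
    open_questions=["Is the backoff cap correct?"],
)
reviewer = fresh_context("reviewer", handoff)
```

The reviewer here starts with two messages instead of fifty, so it judges the result on its merits and does not inherit the implementer's assumptions.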

In this chapter, you learn how to build the five-phase agentic AI development workflow and close the feedback loop with the self-healing approach. You set up subagents with clear responsibilities and outcomes that hand off tasks to each other for the next phase. You cover multiple review and refactoring angles with official Anthropic skills such as /simplify-changes and /security-review, and with custom skills you'll build, such as /review-changes, /verify, and /wrap-up.

After this chapter, your workflow will produce production-grade code with no slop.

Draft - In Progress. This chapter is currently being written. Full content coming soon.
