Write your CLAUDE.md, create modular rules, and wire up hooks that make verification automatic and deterministic.

Chapter 4 set up your project, configured permissions, and gave Claude the autonomy to work without constant interruption. But nothing yet stops it from ignoring your conventions, generating duplicated code, or claiming a task is complete when the logic is wrong.

GitClear's 2024 research measured the damage: an 8x increase in code duplication in AI-assisted codebases compared to human-only development.

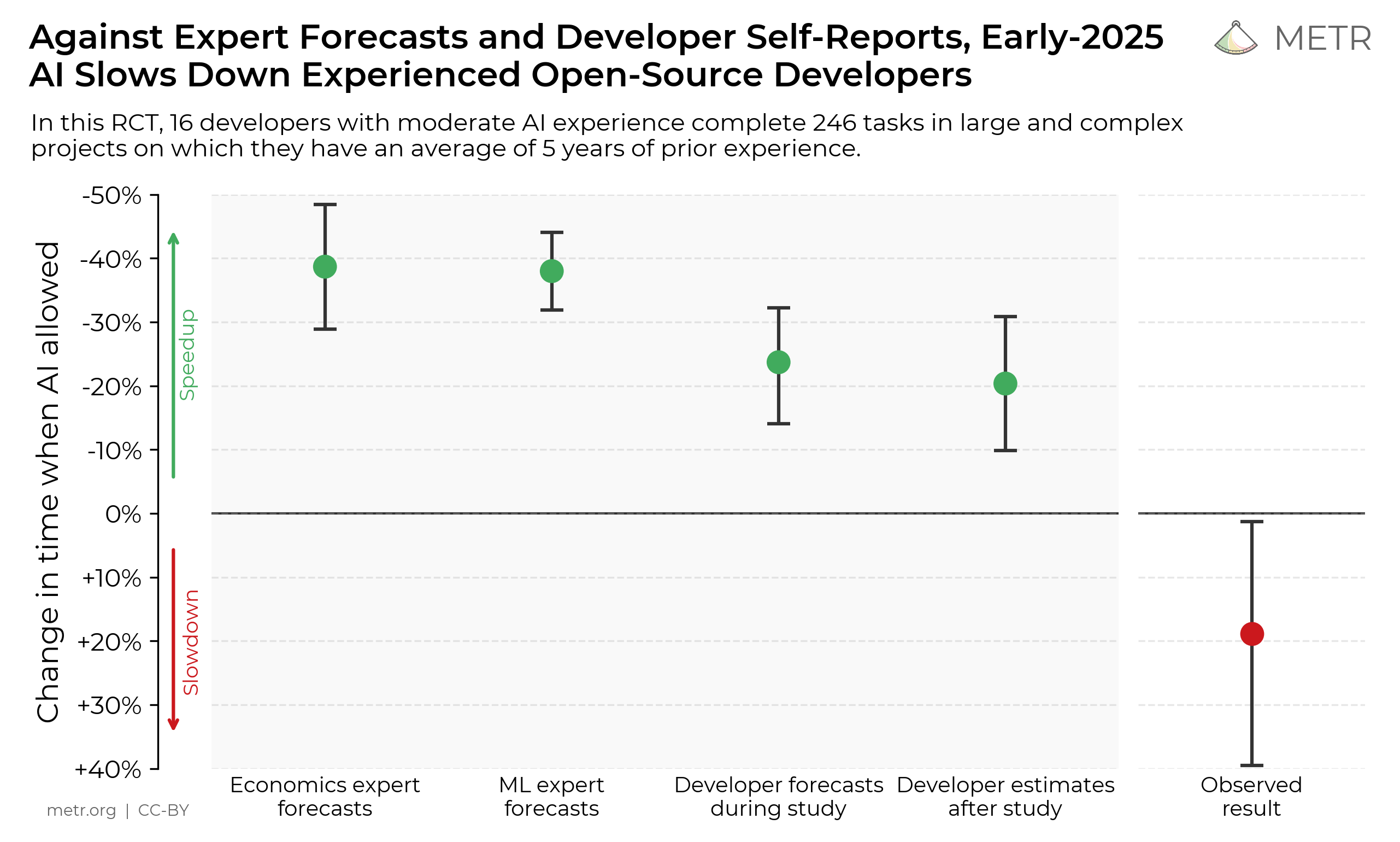

METR's 2025 study found that experienced developers were 19% slower on certain tasks with AI assistance. Two factors drove the slowdown. First, the developers already knew their own repositories so well (on average 5 years of experience and 1,500 commits) that AI had limited room to help. Second, AI performed poorly in large, complex codebases (on average 10 years old, with more than 1.1M lines of code). Developers ended up doing the verification themselves instead of the agent, and still got slower because AI reliability was too low for the effort to pay off.

Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity

We conduct a randomized controlled trial to understand how early-2025 AI tools affect the productivity of experienced open-source developers working on their own repositories. Surprisingly, we find that when developers use AI tools, they take 19% longer than without—AI makes them slower.

Slop is the industry term for AI-generated code that looks correct at first glance but has logical holes. Andrej Karpathy described the pattern precisely: LLMs "don't manage their confusion, don't seek clarifications, don't surface inconsistencies." They produce plausible code and move on.

Boris Cherny, Claude Code's creator, stated the fix clearly: "Probably the most important thing to get great results: give Claude a way to verify its work. If Claude has that feedback loop, it will 2-3x the quality of the final result."

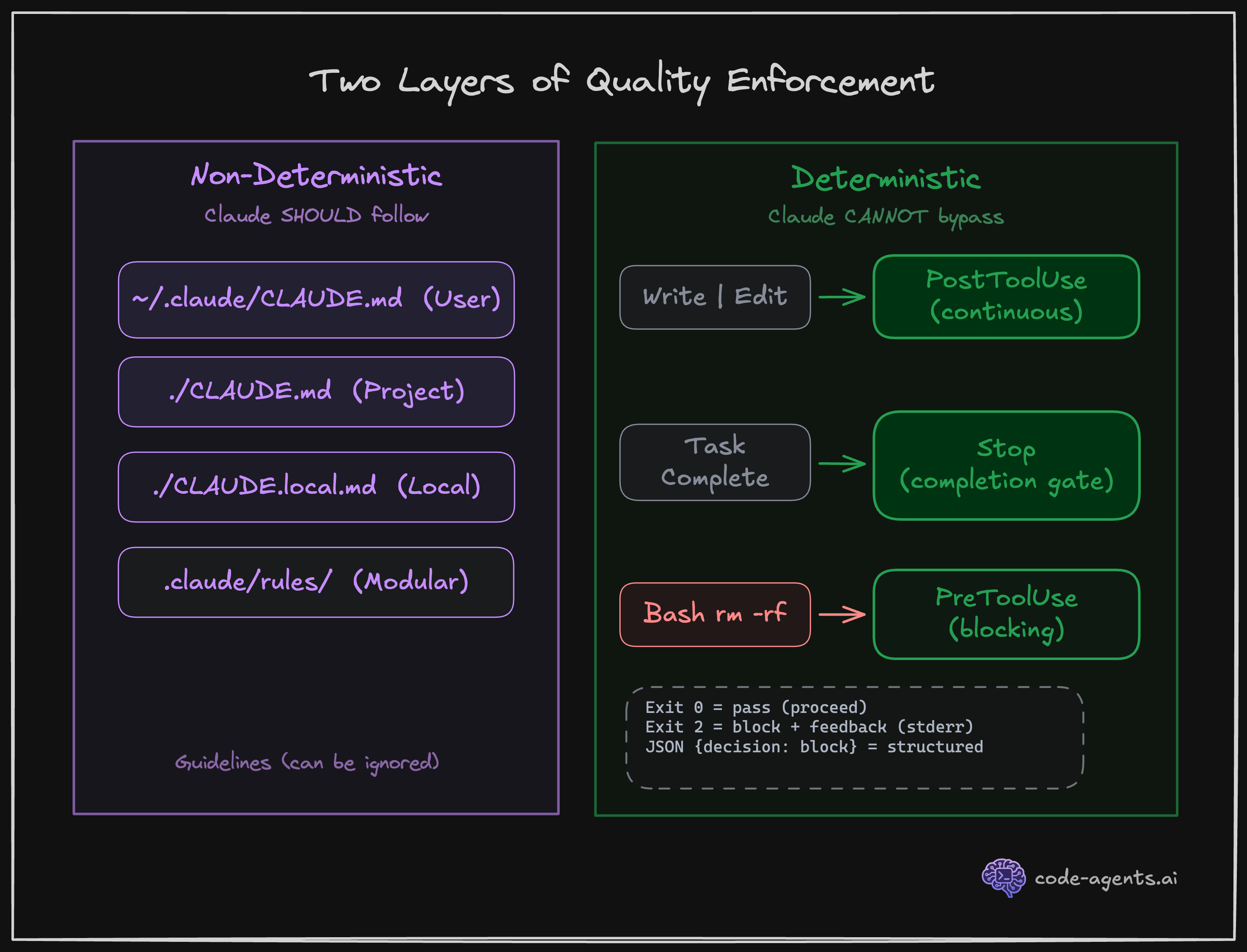

This chapter is the first step toward building that verification system. You will start building quality guardrails using two layers of enforcement:

Hooks run at the system level. Claude does not decide whether to verify; the system enforces verification for it.
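To make the idea of system-level enforcement concrete, here is a minimal sketch of a hook script, not the chapter's own implementation. It assumes the hook receives a JSON event on stdin with `tool_name` and `tool_input.file_path` fields, and that exit code 2 signals a blocking failure whose stderr is fed back to the agent; confirm both against the current Claude Code hooks documentation. The names `file_to_check` and `run_hook` are hypothetical, and the in-process syntax check is a stand-in for your real linter or test command.

```python
import json
import sys

# Hypothetical choice: only verify Python files in this sketch.
CHECKED_SUFFIXES = (".py",)


def file_to_check(event):
    """Return the edited file path if this event should be verified, else None."""
    # Assumed event shape: {"tool_name": ..., "tool_input": {"file_path": ...}}
    if event.get("tool_name") not in {"Edit", "Write"}:
        return None
    path = (event.get("tool_input") or {}).get("file_path", "")
    return path if path.endswith(CHECKED_SUFFIXES) else None


def run_hook(stdin_text):
    """Verify the file named in the event and return the hook's exit code."""
    path = file_to_check(json.loads(stdin_text))
    if path is None:
        return 0  # Nothing to verify for this tool call.
    try:
        with open(path, encoding="utf-8") as handle:
            # Stand-in check: does the file at least parse as Python?
            compile(handle.read(), path, "exec")
    except (OSError, SyntaxError) as error:
        print(f"Verification failed for {path}: {error}", file=sys.stderr)
        return 2  # Assumed to be the blocking exit code.
    return 0


# When saved as a standalone script, finish with:
#     sys.exit(run_hook(sys.stdin.read()))
```

The point of the design is that the check runs on every matching tool call regardless of what the agent intends, so the result is deterministic: the same edit always triggers the same verification.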

AI output without verification is a liability, not a feature.

Draft - In Progress. This chapter is currently being written. Full content coming soon.
